As some of you may already know, it’s getting to be crunch time for me on my PhD. So for the next little bit, my blog posts will probably mostly be on things that directly relate to my dissertation. Consider this your behind-the-scenes pass to the research process.

With that in mind, today we’ll be looking at some work that’s closely related to my own. (And, not coincidentally, that I need to include in my lit review. Twofer.) These papers all share a common thread: social categories and speech perception. I’ve talked about this before, but these are more recent peer-reviewed papers on the topic.

Vowel perception by listeners from different English dialects

In this paper, Karpinska and co-authors investigated the effect of a listener’s regional dialect on their use of two acoustic cues: formants and duration.

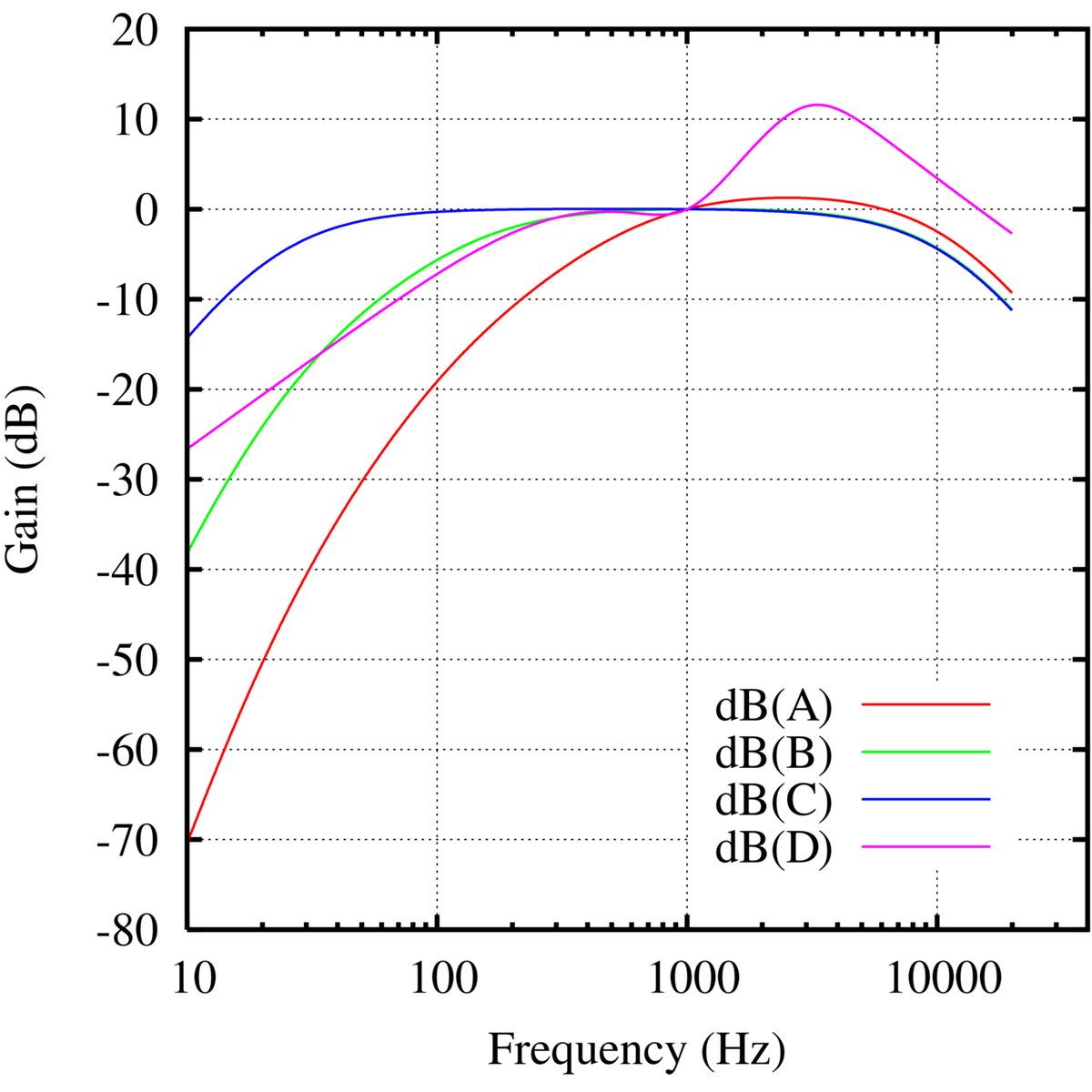

An acoustic cue is a specific part of the speech signal that you pay attention to in order to decide what sound you’re hearing. For example, when listening to a fricative, like “s” or “sh”, you pay a lot of attention to the high-pitched, staticky-sounding part of the sound to tell which fricative you’re hearing. This cue helps you tell the difference between “sip” and “ship”, and if it gets removed or covered by another sound you’ll have a hard time telling those words apart.

They found that for listeners from the UK, New Zealand, Ireland and Singapore, formants were the most important cue distinguishing the vowels in “bit” and “beat”. For Australian listeners, however, duration (how long the vowel was) was a more important cue to the identity of these vowels. This study provides additional evidence that a listener’s dialect affects their speech perception, and in particular which cues they rely on.
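To make the idea of cue weighting a bit more concrete, here is a minimal sketch of two imaginary listeners hearing the same token. Everything in it is an assumption for illustration: the function name `prob_beat`, the -1 to +1 cue scale, and the weights are all made up, and this is not the analysis used in the paper.

```python
import math


def prob_beat(formant_cue, duration_cue, w_formant, w_duration):
    """Probability of hearing "beat" rather than "bit".

    Both cues sit on a made-up scale from -1 (most "bit"-like) to
    +1 (most "beat"-like). The weights are hypothetical, not estimates
    from the paper.
    """
    # Weighted sum of the two cues, squashed into a probability.
    evidence = w_formant * formant_cue + w_duration * duration_cue
    return 1.0 / (1.0 + math.exp(-evidence))


# A conflicting token: "bit"-like formants, but a long, "beat"-like duration.
token = {"formant_cue": -0.5, "duration_cue": 0.8}

# Two hypothetical listeners who weight the cues differently.
formant_listener = prob_beat(**token, w_formant=4.0, w_duration=1.0)
duration_listener = prob_beat(**token, w_formant=1.0, w_duration=4.0)

print(f"formant-weighted listener:  P(beat) = {formant_listener:.2f}")
print(f"duration-weighted listener: P(beat) = {duration_listener:.2f}")
```

The same sound ends up on opposite sides of the “bit”/“beat” boundary depending only on which cue the listener trusts more, which is the kind of dialect difference the study is about.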

Social categories are shared across bilinguals’ lexicons

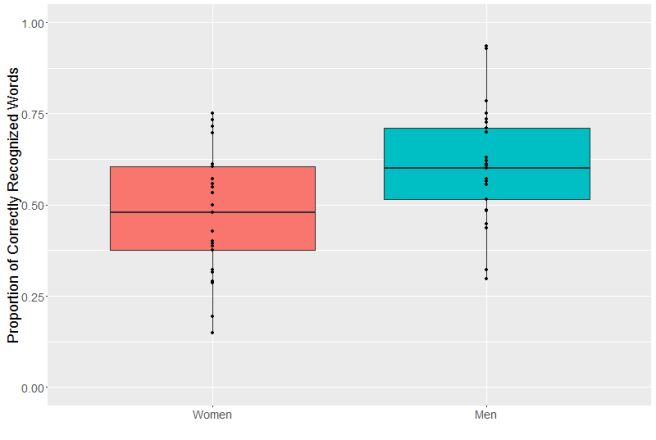

In this experiment Szakay and co-authors looked at English-Māori bilinguals from New Zealand. In New Zealand, there are multiple dialects of English, including Māori English (the variety used by Native New Zealanders) and Pākehā English (the variety used by white New Zealanders). The authors found that there was a cross-language priming effect from Māori to English, but only for Māori English.

Priming is a phenomenon in linguistics where hearing or saying a particular linguistic unit, like a word, later makes it easier to understand or say a similar unit. So if I show you a picture of a dog, you’re going to be able to read the word “puppy” faster than you would have otherwise because you’re already thinking about canines.

They argue that this is due to the activation of language forms associated with a specific social identity, in this case Māori ethnicity. This provides evidence that listeners’ beliefs about a speaker’s social identity affect their processing.

Intergroup Dynamics in Speech Perception: Interaction Among Experience, Attitudes and Expectations

Nguyen and co-authors investigate the effects of three separate factors on speech perception:

- Experience: How much prior interaction a listener has had with a given speech variety.

- Attitudes: How a listener feels about a speech variety and those who speak it.

- Expectations: Whether a listener knows what speech variety they’re going to hear. (In this case only some listeners were explicitly told what speech variety they were going to hear.)

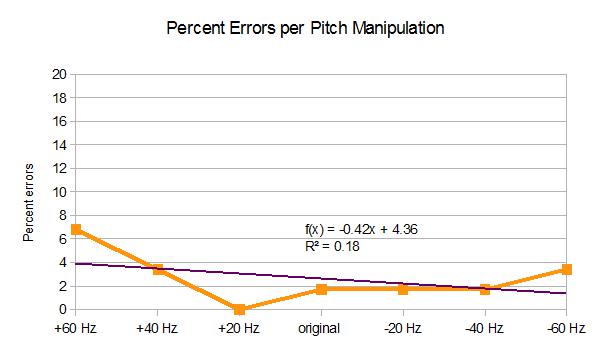

They found that these three factors all influenced the speech perception of Australian English speakers listening to Vietnamese-accented English, and that the factors interacted with one another. In general, participants with correct expectations (i.e. those told beforehand that they were going to hear Vietnamese-accented English) identified more words correctly.

There was an interesting interaction between listeners’ attitudes towards Asians and their experience with Vietnamese-accented English. Listeners who had negative prejudices towards Asians and little experience with Vietnamese English were more accurate than those with little experience and positive prejudice. The authors suggest that this was due to listeners with negative prejudice being more attentive. However, the opposite effect was found for listeners with experience listening to Vietnamese English. In this group, positive prejudice increased accuracy while negative prejudice decreased it. There were, however, uneven numbers of participants between the groups, so this might have skewed the results.
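If “interaction” feels abstract, the pattern described above is what’s usually called a crossover: the effect of attitude flips sign depending on experience. The sketch below shows the arithmetic. The accuracy values are invented purely for illustration and are not the paper’s data; the labels and the helper `attitude_effect` are likewise hypothetical.

```python
# Invented proportions of words identified correctly, for illustration only.
# These are NOT results from the paper.
accuracy = {
    ("low experience", "negative attitude"): 0.60,
    ("low experience", "positive attitude"): 0.50,
    ("high experience", "negative attitude"): 0.55,
    ("high experience", "positive attitude"): 0.70,
}


def attitude_effect(experience):
    """Accuracy with a positive attitude minus accuracy with a negative one."""
    return (accuracy[(experience, "positive attitude")]
            - accuracy[(experience, "negative attitude")])


low = attitude_effect("low experience")    # negative: positive attitude hurts
high = attitude_effect("high experience")  # positive: positive attitude helps

# The interaction is the difference between the two attitude effects.
# If attitude mattered equally at both experience levels, this would be zero.
interaction = high - low

print(f"attitude effect, low experience:  {low:+.2f}")
print(f"attitude effect, high experience: {high:+.2f}")
print(f"interaction contrast:             {interaction:+.2f}")
```

With uneven group sizes, each of those four cell means is estimated with a different amount of certainty, which is one reason to be cautious about the size of the contrast.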

For me, this study is most useful because it shows that a listener’s experience with a speech variety and their expectation of hearing it affect their perception. I would, however, like to see a larger listener sample, especially given the strong negative skew in listeners’ attitudes towards Asians (which I realize the researchers couldn’t have controlled for).

Perceiving the World through Group-Colored Glasses: A Perceptual Model of Intergroup Relations

Rather than presenting an experiment, this paper lays out a framework for the interplay of social group affiliation and perception. The authors pull together numerous lines of research showing that an individual’s own group affiliation can change their perception and interpretation of the same stimuli. In the authors’ own words:

“The central premise of our model is that social identification influences perception.”

While they discuss perception across many domains (visual, tactile, olfactory, etc.), the part that fits most directly with my work is auditory perception. As they point out, auditory perception of speech depends on both bottom-up and top-down information. Bottom-up information, in speech perception, is the acoustic signal, while top-down information includes both knowledge of the language (like which words are more common) and social knowledge (like our knowledge of different dialects). While the authors do not discuss dialect perception directly, other work (including the three studies discussed above) fits nicely into this framework.
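One simple way to picture bottom-up and top-down information working together is Bayes’ rule, where the acoustic signal supplies a likelihood and the listener’s expectations supply a prior. This is my own illustrative sketch rather than a formalism taken from the paper, and the probabilities and the function name `posterior` are invented.

```python
def posterior(likelihood, prior):
    """Combine bottom-up and top-down information with Bayes' rule."""
    # Multiply how well the signal fits each word by how expected the word is.
    unnormalized = {word: likelihood[word] * prior[word] for word in likelihood}
    total = sum(unnormalized.values())
    # Rescale so the probabilities sum to one.
    return {word: value / total for word, value in unnormalized.items()}


# Bottom-up: an ambiguous token that fits both words equally well (invented).
likelihood = {"bit": 0.5, "beat": 0.5}

# Top-down: what the listener expects before hearing anything (invented).
neutral_prior = {"bit": 0.5, "beat": 0.5}
biased_prior = {"bit": 0.8, "beat": 0.2}

print(posterior(likelihood, neutral_prior))
print(posterior(likelihood, biased_prior))
```

When the signal is ambiguous, the listener’s expectations decide the outcome. Social knowledge, like what dialect you think you’re hearing, would enter this picture by changing the prior or the likelihoods.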

The key difference between this framework and Kleinschmidt & Jaeger’s Robust Speech Perception model is the centrality of the speaker’s identity. Since all language users have their own dialect, which affects their speech perception (although, of course, some listeners can fluently listen to more than one dialect), it is important to consider both the listener’s and the talker’s social affiliation when modelling speech perception.