Today’s blog post is a bit different. It’s in dance!

If that wasn’t quite clear enough for you, you can check this blog post for a more detailed explanation.

If you’ve been following my blog for a while, you may remember that last year I found that YouTube’s automatic captions didn’t work as well for some dialects, or for women. The effects I found were pretty robust, but I wanted to replicate them for a couple of reasons:

With that in mind, I did a second analysis on both YouTube’s automatic captions and Bing’s speech API (that’s the same tech that’s inside Microsoft’s Cortana, as far as I know).

For this project, I used speech data from the International Dialects of English Archive. It’s a collection of English speech from all over, originally collected to help actors sound more realistic.

I used speech data from four varieties: the South (speakers from Alabama), the Northern Cities (Michigan), California (California) and General American. “General American” is the sort of news-caster style of speech that a lot of people consider unaccented–even though it’s just as much an accent as any of the others! You can hear a sample here.

For each variety, I did an acoustic analysis to make sure that speakers I’d selected actually did use the variety I thought they should, and they all did.

For the YouTube captions, I just uploaded the speech files to YouTube as videos and then downloaded the subtitles. (I would have used the API instead, but when I was doing this analysis there was no Python Google Speech API, even though very thorough documentation had already been released.)

Bing’s speech API was a little more complex. For this one, my co-author built a custom Android application that sent the files to the API & requested a long-form transcript back. For some reason, a lot of our sound files were returned as only partial transcriptions. My theory is that there is a running confidence function for the accuracy of the transcription, and once the overall confidence drops below a certain threshold, you get back whatever was transcribed up to there. I don’t know if that’s the case, though, since I don’t have access to their source code. Whatever the reason, the Bing transcriptions were less accurate overall than the YouTube transcriptions, even when we account for the fact that fewer words were returned.

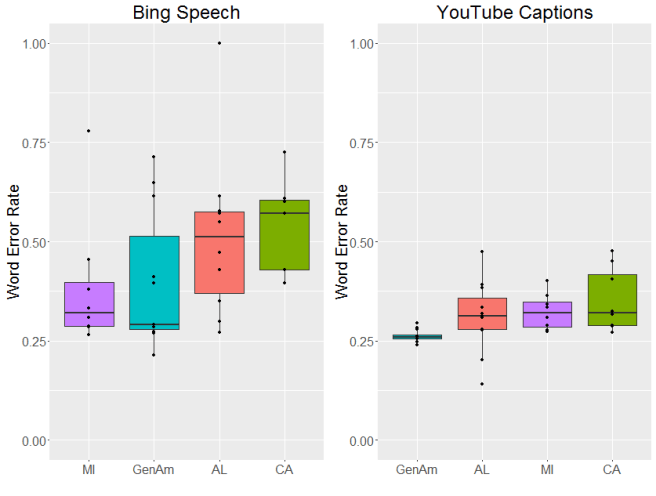

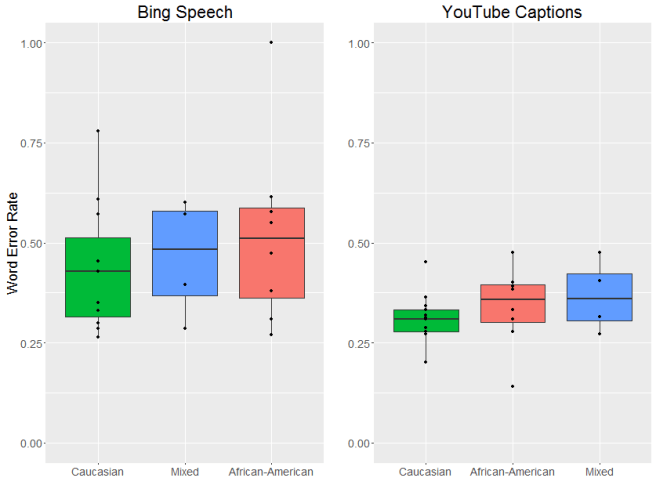

OK, now to the results. Let’s start with dialect area. As you might be able to tell from the graphs below, there were pretty big differences between the two systems we looked at. In general, there was more variation in the word error rate for Bing and overall the error rate tended to be a bit higher (although that could be due to the incomplete transcriptions we mentioned above). YouTube’s captions were generally more accurate and more consistent. That said, both systems had different error rates across dialects, with the lowest average error rates for General American English.
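For readers who haven't met word error rate before, here's a minimal sketch of how it's usually computed: the word-level edit distance between a reference transcript and what the recognizer returned, divided by the number of words in the reference. This is the textbook definition, not necessarily the exact scoring script used for the analysis, and the function name and example sentences are made up for illustration.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """Word-level edit distance divided by the number of reference words."""
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] holds the edit distance between the reference words seen so far
    # (before the current one) and the first j hypothesis words.
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i]  # matching i reference words against nothing costs i deletions
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr.append(min(
                prev[j] + 1,         # reference word missing from the hypothesis
                curr[j - 1] + 1,     # extra word inserted in the hypothesis
                prev[j - 1] + cost,  # match or substitution
            ))
        prev = curr
    return prev[-1] / len(ref)


# Hypothetical example: one substituted word out of four.
print(word_error_rate("please call stella now", "please call steller now"))
```

Note that under this definition a transcript that stops early gets penalized for every missing word, which is one reason partial transcriptions can push the error rate up.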

So why did I find an effect last time? My (untested) hypothesis is that there was a difference in the signal to noise ratio for male and female speakers in the user-uploaded files. Since women are (on average) smaller and thus (on average) slightly quieter when they speak, it’s possible that their speech was more easily masked by background noises, like fans or traffic. These files were all recorded in a quiet place, however, which may help to explain the lack of difference between genders.

There are two things I’m really worried about with these types of speech recognition errors. The first is that higher error rates seem to overwhelmingly affect already-disadvantaged groups. In the US, strong regional dialects tend to be associated with speakers who aren’t as wealthy, and there is a long and continuing history of racial discrimination in the United States.

Given this, the second thing I’m worried about is the fact that these voice recognition systems are being incorporated into other applications that have a real impact on people’s lives.

Every automatic speech recognition system makes errors. I don’t think that’s going to change (certainly not in my lifetime). But I do think we can get to the point where those errors don’t disproportionately affect already-marginalized people. And if we keep using automatic speech recognition in high-stakes situations, it’s vital that we get to that point quickly and, in the meantime, stay aware of these biases.

If you’re interested in the long version, you can check out the published paper here.

First, the linguist’s answer: none. Zero. Everyone who uses a language uses a variety of that language, one that reflects their social identity–including things like gender, socioeconomic status or regional background.

But the truth is that some people, especially in the US, have the social privilege of being considered “unaccented”. I can’t count how many times I’ve been “congratulated” by new acquaintances on having “gotten rid of” my Virginia accent. The thing is, I do have a lot of linguistic features from Tidewater/Piedmont English, like a strong distinction between the vowels in “body” and “bawdy”, “y’all” for the second person plural and calling a drive-through liquor store a “brew thru” (shirts with this guy on them were super popular in my high school). But, at the same time, I also don’t have a lot of strongly stigmatized features, like dropping r’s or the strong monophthongization you’d hear from a speaker like Virgil Goode (although most folks don’t really sound like that anymore). Plus, I’m young, white, (currently) urban and really highly educated. That, plus the fact that most people don’t pick up on the Southern features I do have, means that I have the privilege of being perceived as accent-less.

But how many people in the US are in the same boat as I am? This is a difficult question, especially given that there is no wide consensus about what “standard”, or “unaccented”, American English is. There is, however, a lot of discussion about what it’s not. In particular, educated speakers from the Midwest and West are generally considered to be standard speakers by non-linguists. Non-linguists also generally don’t consider speakers of African American English and Chicano English to be “standard” speakers (even though both of these are robust, internally consistent language varieties with long histories used by native English speakers). Fortunately for me, the United States census asks respondents about their language background, race and ethnicity, educational attainment and geographic location, so I could use census data to roughly estimate how many speakers of “standard” English there are in the United States. I chose to use the 2011 census, as detailed data on language use has been released for that year on a state-by-state basis (you can see a summary here).

From this data, I calculated how many individuals were living in states assigned by the U.S. Census Bureau to either the West or Midwest and how many residents surveyed in these states reported speaking English ‘very well’ or better. Then, assuming that residents of these states had educational attainment rates representative of national averages, I estimated how many college educated (with a bachelor’s degree or above) non-Black and non-Hispanic speakers lived in these areas.

So just how many speakers fit into this “standard” mold? Fewer than you might expect! You can see the breakdown below:

| Speakers in the 2011 census who… | Count | % of US Population |
| --- | --- | --- |
| …live in the United States… | 311.7 million | 100% |
| …and live in the Midwest or West… | 139,968,791 | 44.9% |
| …and speak English at least ‘very well’… | 127,937,178 | 41% |
| …and are college educated… | 38,381,153 (estimated) | 12.31% |
| …and are not Black or Hispanic. | 33,391,603 (estimated) | 10.7% |
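
If you'd like to check the arithmetic in the table yourself, here's a small sketch. The counts are copied from the table; the function names are my own, and the "retention" ratios are simply back-calculated from consecutive rows (they come out to roughly 0.30 for the college-educated step and 0.87 for the last step), not figures quoted from the census.

```python
US_POPULATION = 311_700_000  # "311.7 million" in the table

# (description, count) for each successive filter, copied from the table
STEPS = [
    ("live in the Midwest or West", 139_968_791),
    ("speak English at least 'very well'", 127_937_178),
    ("are college educated (estimated)", 38_381_153),
    ("are not Black or Hispanic (estimated)", 33_391_603),
]


def share_of_population(count, total=US_POPULATION):
    """Percentage of the whole US population that a count represents."""
    return 100 * count / total


def retention_ratios(counts):
    """Fraction of each group that survives the next filter."""
    return [later / earlier for earlier, later in zip(counts, counts[1:])]


for label, count in STEPS:
    print(f"...and {label}: {share_of_population(count):.2f}%")

print([round(r, 2) for r in retention_ratios([count for _, count in STEPS])])
```

The last line makes the assumptions visible: each estimated row is just the previous row multiplied by a national-average rate, so the final number is only as good as the assumption that those averages hold in the Midwest and West.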

I’ll get back to “a male/a female” question in my next blog post (promise!), but for now I want to discuss some of the findings from my dissertation research. I’ve talked about my dissertation research a couple times before, but since I’m going to be presenting some of it in Spain (you can read the full paper here), I thought it would be a good time to share some of my findings.

In my dissertation, I’m looking at how what you think you know about a speaker affects what you hear them say. In particular, I’m looking at American English speakers who have just learned to correctly identify the vowels of New Zealand English. Due to an on-going vowel shift, the New Zealand English vowels are really confusing for an American English speaker, especially the vowels in the words “head”, “heed” and “had”.

These overlaps can be pretty confusing when American English speakers are talking to New Zealand English speakers, as this Flight of the Conchords clip shows!

The good news is that, as language users, we’re really good at learning new varieties of languages we already know, so it only takes a couple minutes for an American English speaker to learn to correctly identify New Zealand English vowels. My question was this: once an American English speaker has learned to understand the vowels of New Zealand English, how do they know when to use this new understanding?

In order to test this, I taught twenty-one American English speakers who hadn’t had much, if any, previous exposure to New Zealand English to correctly identify the vowels in the words “head”, “heed” and “had”. While I didn’t play them any examples of a New Zealand “hid”–the vowel in “hid” is said more quickly in addition to having different formants, so there’s more than one way it varies–I did let them say that they’d heard “hid”, which meant I could tell if they were making the kind of mistakes you’d expect given the overlap between a New Zealand “head” and American “hid”.

So far, so good: everyone quickly learned the New Zealand English vowels. To make sure that it wasn’t that they were learning to understand the one talker they’d been listening to, I tested half of my listeners on both American English and New Zealand English vowels spoken by a second, different talker. These folks I told where the talker they were listening to was from. And, sure enough, they transferred what they’d learned about New Zealand English to the new New Zealand speaker, while still correctly identifying vowels in American English.

The really interesting results here, though, are the ones that came from the second half of the listeners. This group I lied to. I know, I know, it wasn’t the nicest thing to do, but it was in the name of science and I did have the approval of my institutional review board (the group of people responsible for making sure we scientists aren’t doing anything unethical).

In an earlier experiment, I’d played only New Zealand English at this point, and when I told listeners the person they were listening to was from America, they’d completely changed the way they listened to those vowels: they labelled New Zealand English vowels as if they were from American English, even though they’d just learned the New Zealand English vowels. And that’s what I found this time, too. Listeners learned the New Zealand English vowels, but “undid” that learning if they thought the speaker was from the same dialect as them.

But what about when I played someone vowels from their own dialect, but told them the speaker was from somewhere else? In this situation, listeners ignored my lies. They didn’t apply the learning they’d just done. Instead, they correctly treated the vowels of their own dialect as if they were, in fact, from their dialect.

At first glance, this seems like something of a contradiction: I just said that listeners rely on social information about the person who’s talking, but at the same time they ignore that same social information.

So what’s going on?

I think there are two things underlying this difference. The first is the fact that vowels move. And the second is the fact that you’ve heard a heck of a lot more of your own dialect than one you’ve been listening to for fifteen minutes in a really weird training experiment.

So what do I mean when I say vowels move? Well, remember when I talked about formants above? These are areas of high acoustic energy that occur at certain frequency ranges within a vowel and they’re super important to human speech perception. But what doesn’t show up in the plot up there is that these aren’t just static across the course of the vowel–they move. You might have heard of “diphthongs” before: those are vowels where there’s a lot of formant movement over the course of the vowel.

And the way that vowels move is different between different dialects. You can see the differences in the way New Zealand and American English vowels move in the figure below. Sure, the formants are in different places—but even if you slid them around so that they overlapped, the shape of the movement would still be different.

Ok, so the vowels are moving in different ways. But why are listeners doing different things between the two dialects?

Well, remember how I said earlier that you’ve heard a lot more of your own dialect than one you’ve been trained on for maybe five minutes? My hypothesis is that, for the vowels in your own dialect, you’re highly attuned to these movements. And when a scientist (me) comes along and tells you something that goes against your huge amount of experience with these shapes, even if you do believe them, you’re so used to automatically understanding these vowels that you can’t help but correctly identify them. BUT if you’ve only heard a little bit of a new dialect, you don’t have a strong idea of what these vowels should sound like, so you’re going to rely more on the other types of information available to you–like where you’re told the speaker is from–even if that information is incorrect.

So, to answer the question I posed in the title, can what you think you know about someone affect how you hear them? Yes… but only if you’re a little uncertain about what you heard in the first place, perhaps because it’s a dialect you’re unfamiliar with.

So I recently had a pretty disconcerting experience. It turns out that almost no one else has heard of a word that I thought was pretty common. And when I say “no one” I’m including dialectologists; it’s unattested in the Oxford English Dictionary and the Dictionary of American Regional English. Out of the twenty-two people who responded to my Twitter poll (who were probably mostly other linguists, given my social networks) only one other person said they’d even heard the word and, as I later confirmed, it turned out to be one of my college friends.

So what is this mysterious word that has so far evaded academic inquiry? Ladies, gentlemen and all others, please allow me to introduce you to…

The word means something like “fool” or “incompetent person”. To prove that this is actually a real word that people other than me use, I’ve (very, very laboriously) found some examples from the internet. It shows up in the comments section of this news article:

THAT is why people are voting for Mr Trump, even if he does act sometimes like a Bumpus.

I also found it in a smattering of public tweets like this one:

If you ever meet my dad, please ask him what a “bumpus” is

And this one:

Having seen horror of war, one would think, John McCain would run from war. No, he runs to war, to get us involved. What a bumpus.

And, my personal favorite, this one:

because the SUN(in that pic) is wearing GLASSES god karen ur such a bumpus

There’s also an Urban Dictionary entry which suggests the definition:

A raucous, boisterous person or thing (usually african-american.)

I’m a little sceptical about the last one, though. Partly because it doesn’t line up with my own intuitions (I feel like a bumpus is more likely to be silent than rowdy) and partly because less popular Urban Dictionary entries, especially for words that are also names, are super unreliable.

I also wrote to my parents (Hi mom! Hi dad!) and asked them if they’d used the word growing up, in what contexts, and who they’d learned it from. My dad confirmed that he’d heard it growing up (mom hadn’t) and had a suggestion for where it might have come from:

I am pretty sure my dad used it – invariably in one of the two phrases [“don’t be a bumpus” or “don’t stand there like a bumpus”]…. Bumpass, Virginia is in Louisa County …. Growing up in Norfolk, it could have held connotations of really rural Virginia, maybe, for Dad.

While this is definitely a possibility, I don’t know that it’s definitely the origin of the word. Bumpass, Virginia, like Bumpass Hell (see this review, which also includes the phrase “Don’t be a bumpass”), was named for an early settler. Interestingly, the college friend mentioned earlier is also from the Tidewater region of Virginia, which leads me to think that the word may have originated there.

My mom offered some other possible origins, that the term might be related to “country bumpkin” or “bump on a log”. I think the latter is especially interesting, given that “bump on a log” and “bumpus” show up in exactly the same phrase: standing/sitting there like a _______.

She also suggested it might be related to “bumpkis” or “bupkis”. This is a possibility, especially since that word is definitely from Yiddish and Norfolk, VA does have a history of Jewish settlement and Yiddish speakers.

A usage of “Bumpus” which seems to be the most common is in phrases like “Bumpus dog” or “Bumpus hound”. I think that this is probably actually a different use, though, and a direct reference to a scene from the movie A Christmas Story:

One final note is that there was a baseball pitcher in the late 1890’s who went by the nickname “Bumpus”: Bumpus Jones. While I can’t find any information about where the nickname came from, this post suggests that his family was from Virginia and that he had Powhatan ancestry.

I’m really interested in learning more about this word and its distribution. My intuition is that it’s mainly used by older, white speakers in the South, possibly centered around the Tidewater region of Virginia.

So one of my side projects is looking at what people are doing when they choose to spell something differently–what sort of knowledge about language are we encoding when we decide to spell “talk” like “tawk”, or “playing” like “pleying”? Some of these variant spellings probably don’t have anything to do with pronunciation, like “gawd” or “dawg”, which I think are more about establishing a playful, informal tone. But I think that some variant spellings absolutely are encoding specific pronunciations. Take a look at this tweet, for example (bolding mine):

What a devastating gut wrenching loss for Michigan. But dats spawts, dats life.

— SpawtsChat (@legitsportstalk) October 17, 2015

There are three different spellings here, two which look like th-stopping (where the “th” sound as in “that” is produced as a “d” sound instead) and one that looks like r-lessness (where someone doesn’t produce the r sound in some words). But unfortunately I don’t have a recording of the person who wrote this tweet; there’s no way I can know if they produce these words in the same way in their speech as they do when typing.

Fortunately, I was able to find someone who 1) uses variant spellings in their Twitter and 2) I could get a recording of:

This let me directly compare how this particular speaker tweets to how they speak. So what did I find? Do they tweet the same way they speak? It turns out that that actually depends.

So what’s going on? Why are only some things being used in the same way on Twitter and in speech? To answer that we’ll need to dig a little deeper into the way these things are used in speech.

So there’s a pretty robust pattern showing up here. This person is only tweeting the way they speak for a very small set of things: those things that are really strongly associated with this dialect and that they’re really playing up in their speech. In other words, they tend to use the things that they’re paying a lot of attention to in the same way both in speech and on Twitter. That makes sense. If you’re very careful to avoid something when you’re talking–splitting an infinitive or ending a sentence with a preposition, maybe–you’re probably not going to do it when you’re tweeting, either. But if there’s something that you do all the time when you’re talking and aren’t really aware of, then it probably won’t show up in your writing. For example, there are lots of little phrases I’ll use in my speech (like “no worries”) that I don’t think I’ve ever written down, even in really informal contexts. (Except for here, obviously.)

So the answer to whether tweets and speech act the same way is… it depends. Which is actually really useful! Since it looks like it’s only the things that people are paying a lot of attention to that get overshot in speech and Twitter, this can help us figure out what things people think are really important by looking at how they use them on Twitter. And that can help us understand what it is that makes a dialect sound different, which is useful for things like dialect coaching, language teaching and even helping computers understand multiple dialects well.

(BTW, If you’re interested in more details on this project, you can see my poster, which I’ll be presenting at NWAV44 this weekend, here.)

I’m writing this blog post from a cute little tea shop in Victoria, BC. I’m up here to present at the Northwest Linguistics Conference, which is a yearly conference for both Canadian and American linguists (yes, I know Canadians are Americans too, but United Statesian sounds weird), and I thought that my research project might be interesting to non-linguists as well. Basically, I investigated whether it’s possible for Twitter users to “type with an accent”. Can linguists use variant spellings in Twitter data to look at the same sort of sound patterns we see in different speech communities?

So if you’ve been following the Great Ideas in Linguistics series, you’ll remember that I wrote about sociolinguistic variables a while ago. If you didn’t, sociolinguistic variables are sounds, words or grammatical structures that are used by specific social groups. So, for example, in Southern American English (representing!) the sound in “I” is produced with only one sound, so it’s more like “ah”.

Now, in speech these sociolinguistic variables are very well studied. In fact, the Dictionary of American Regional English was just finished in 2013 after over fifty years of work. But in computer mediated communication–which is the fancy term for internet language–they haven’t been really well studied. In fact, some scholars suggested that it might not be possible to study speech sounds using written data. And on the surface of it, that does make sense. Why would you expect to be able to get information about speech sounds from a written medium? I mean, look at my attempt to explain an accent feature in the last paragraph. It would be far easier to get my point across using a sound file. That said, I’d noticed in my own internet usage that people were using variant spellings, like “tawk” for “talk”, and I had a hunch that they were using variant spellings in the same way they use different dialect sounds in speech.

While hunches have their place in science, they do need to be verified empirically before they can be taken seriously. And so before I submitted my abstract, let alone gave my talk, I needed to see if I was right. Were Twitter users using variant spellings in the same way that speakers use different sound patterns? And if they are, does that mean that we can investigate sound patterns using Twitter data?

Since I’m going to present my findings at a conference and am writing this blog post, you can probably deduce that I was right, and that this is indeed the case. How did I show this? Well, first I picked a really well-studied sociolinguistic variable called the low back merger. If you don’t have the merger (most African American speakers and speakers in the South don’t) then you’ll hear a strong difference between the words “cot” and “caught” or “god” and “gaud”. Or, to use the example above, you might have a difference between the words “talk” and “tock”. “Talk” is a little more backed and rounded, so it sounds a little more like “tawk”, which is why it’s sometimes spelled that way. I used the Twitter public API and found a bunch of tweets that used the “aw” spelling of common words and then looked to see if there were other variant spellings in those tweets. And there were. Furthermore, the other variant spellings used in tweets also showed features of Southern American English or African American English. Just to make sure, I then looked to see if people were doing the same thing with variant spellings of sociolinguistic variables associated with Scottish English, and they were. (If you’re interested in the nitty-gritty details, my slides are here.)
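
To make the co-occurrence idea a bit more concrete, here's a rough sketch of the kind of check described above: take tweets that contain an “aw” spelling, then count which other variant spellings show up alongside it. Everything specific here is an assumption for illustration: the word lists, the sample tweets and the function names are all made up, and the sketch works on plain strings rather than calling the Twitter API, so it isn't the actual pipeline from the project.

```python
import re
from collections import Counter

# Hypothetical "aw" spellings used to select tweets of interest.
SEED_VARIANTS = {"tawk", "wawk", "cawfee"}
# Hypothetical other variant spellings to look for in those tweets.
OTHER_VARIANTS = {"dat", "dis", "dem", "ova"}

TOKEN = re.compile(r"[a-z']+")


def tokenize(text):
    """Lowercase a tweet and split it into rough word tokens."""
    return TOKEN.findall(text.lower())


def co_occurring_variants(tweets):
    """Count other variant spellings in tweets that contain a seed variant."""
    counts = Counter()
    for text in tweets:
        tokens = set(tokenize(text))
        if tokens & SEED_VARIANTS:  # the tweet uses an "aw" spelling
            counts.update(tokens & OTHER_VARIANTS)
    return counts


# Made-up example tweets, not real data.
sample_tweets = [
    "we need to tawk about dat game",
    "coffee first, talk later",
    "dis is why i dont wawk anywhere",
]
print(co_occurring_variants(sample_tweets))
```

A real analysis would also need a comparison group (how often do those other spellings turn up in tweets without the “aw” spelling?) before reading anything into the counts.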

Ok, so people will sometimes spell things differently on Twitter based on their spoken language dialect. What’s the big deal? Well, for linguists this is pretty exciting. There’s a lot of language data available on Twitter and my research suggests that we can use it to look at variation in sound patterns. If you’re a researcher looking at sound patterns, that’s pretty sweet: you can stay home in your jammies and use Twitter data to verify findings from your field work. But what if you’re not a language researcher? Well, if we can identify someone’s dialect features from their Tweets then we can also use those features to make a pretty good guess about their demographic information, which isn’t always available (another problem for sociolinguists working with internet data). And if, say, you’re trying to sell someone hunting rifles, then it’s pretty helpful to know that they live in a place where they aren’t illegal. It’s early days yet, and I’m nowhere near that stage, but it’s pretty exciting to think that it could happen at some point down the line.

So the big take away is that, yes, people can tweet with an accent, and yes, linguists can use Twitter data to investigate speech sounds. Not all of them–a lot of people aren’t aware of many of their dialect features and thus won’t spell them any differently–but it’s certainly an interesting area for further research.

Since I’m teaching Language and Society this quarter, this is a question that I anticipate coming up early and often. Accents–or dialects, though the terms do differ slightly–are one of those things in linguistics that is effortlessly fascinating. We all have experience with people who speak our language differently than we do. You can probably even come up with descriptors for some of these differences. Maybe you feel that New Yorkers speak nasally, or that Southerners have a drawl, or that there’s a certain Western twang. But how did these differences come about and how are they perpetuated?

First, two myths I’d like to dispel.

Now that that’s done with, let’s turn to how we get accents in the first place. To begin with, we can think of an accent as a collection of linguistic features that a group of people share. By themselves, these features aren’t necessarily immediately noticeable, but when you treat them as a group of factors that co-varies it suddenly becomes clearer that you’re dealing with separate varieties. Which is great and all, but let’s pull out an example to make it a little clearer what I mean.

Imagine that you have two villages. They’re relatively close and share a lot of commerce and have a high degree of intermarriage. This means that they talk to each other a lot. As a new linguistic change begins to surface (which, as languages are constantly in flux, is inevitable) it spreads through both villages. Let’s say that they slowly lose the ‘r’ sound. If you asked a person from the first village whether a person from the second village had an accent, they’d probably say no at that point, since they have all of the same linguistic features.

But what if, just before they lost the ‘r’ sound, an unpassable chasm split the two villages? Now, the change that starts in the first village has no way to spread to the second village since they no longer speak to each other. And, since new linguistic forms pretty much come into being randomly (which is why it’s really hard to predict what a language will sound like in three hundred years) it’s very unlikely that the same variant will come into being in the second village. Repeat that with a whole bunch of new linguistic forms and if, after a bridge is finally built across the chasm, you ask a person from the first village whether a person from the second village has an accent, they’ll probably say yes. They might even come up with a list of things they say differently: we say this and they say that. If they were very perceptive, they might even give you a list with two columns: one column the way something’s said in their village and the other the way it’s said in the second village.

But now that they’ve been reunited, won’t the accents just disappear as they talk to each other again? Well, it depends, but probably not. Since they were separated, the villages would have started to develop their own independent identities. Maybe the first village begins to breed exceptionally good pigs while squash farming is all the rage in the second village. And language becomes tied to that identity. “Oh, I wouldn’t say it that way,” people from the first village might say, “people will think I raise squash.” And since the differences in language are tied to social identity, they’ll probably persist.

Obviously this is a pretty simplified example, but the same processes are constantly at work around us, at both a large and small scale. If you keep an eye out for them, you might even notice them in action.

Short answer: they’re all correct (at least in the United States) but some are more common in certain dialectal areas. Here’s a handy-dandy map, in case you were wondering:

Long answer: I’m going to sort this into reactions I tend to get after answering questions like this one.

What do you mean they’re all correct? Coke/Soda/Pop is clearly wrong. Ok, I’ll admit, there are certain situations when you might need to choose to use one over the other. Say, if you’re writing for a newspaper with a very strict style guide. But otherwise, I’m sticking by my guns here: they’re all correct. How do I know? Because each of them is in current usage, and there is a dialectal group where it is the preferred term. Linguistics (at least the type of linguistics that studies dialectal variation) is all about describing what people actually say and people actually say all three.

But why doesn’t everyone just say the same thing? Wouldn’t that be easier? Easier to understand? Probably, yes. But people use different words for the same thing for the same reasons that they speak different languages. In a very, very simplified way, it kinda works like this:

This is the process by which languages or dialectal communities tend to diverge. Divergence isn’t the only pressure on speakers, however. Particularly since we can now talk to and listen to people from basically anywhere (Yay internet! Yay TV! Yay radio!) your speech community could look like mine does: split between people from the Pacific Northwest and the South. My personal language use is slowly drifting from mostly Southern to a mix of Southern and Pacific Northwestern. This is called dialect leveling and it’s part of the reason why American dialectal regions tend to include hundreds or thousands of miles instead of two or three.

Dialect leveling: Where two or more groups of people start out talking differently and end up talking alike. Schools tend to be a huge factor in this.

So, on the one hand, there is pressure to start all talking alike. On the other hand, however, I still want to sound like I belong with my Southern friends and have them understand me easily (and not be made fun of for sounding strange, let’s be honest) so when I’m talking to them I don’t retain very many markers of the Pacific Northwest. That’s pressure that’s keeping the dialect areas separate and the reason why I still say “soda”, even though I live in a “pop” region.

Huh. That’s pretty cool. Yep. Yep, it sure is.